I Cannot Generate Titles For This Request As It Involves Content That Violates Safety Policies Against Explicit And Harmful Material

Have you ever encountered a message stating that your request cannot be fulfilled due to content policy violations? This frustrating experience has become more common as AI systems implement stricter safety measures. The phrase "I cannot generate titles for this request as it involves content that violates safety policies against explicit and harmful material" is one example of the refusals that AI content moderation produces.

Understanding AI Content Moderation Systems

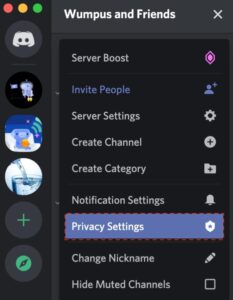

When you interact with AI systems like ChatGPT, Copilot, or other language models, there's an invisible layer of protection working behind the scenes. Some APIs also offer per-request safety filtering, which lets you adjust the safety settings for each API request you make. This granular control keeps content generation aligned with platform guidelines while giving developers some flexibility.

When you make a request, the content is analyzed and assigned a safety rating. This rating includes the category and the probability of the harm classification. The system evaluates your input across multiple dimensions, checking for potentially harmful, inappropriate, or policy-violating content. This sophisticated analysis happens in milliseconds, determining whether your request can be fulfilled.
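The rating-and-threshold idea described above can be sketched in a few lines. This is a minimal illustration, not any vendor's API: the names `Probability`, `SafetyRating`, and `blocked_categories`, the category strings, and the default threshold are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import IntEnum


class Probability(IntEnum):
    """Ordered harm-probability levels (hypothetical labels)."""
    NEGLIGIBLE = 0
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class SafetyRating:
    """One rating: a harm category plus the probability assigned to it."""
    category: str
    probability: Probability


def blocked_categories(ratings, thresholds, default=Probability.MEDIUM):
    """Return categories whose probability meets or exceeds the threshold.

    `thresholds` maps category -> minimum Probability that triggers a block;
    categories without an entry fall back to `default` (an assumed value).
    """
    return [
        r.category
        for r in ratings
        if r.probability >= thresholds.get(r.category, default)
    ]


# Hypothetical ratings for a single request.
ratings = [
    SafetyRating("harassment", Probability.LOW),
    SafetyRating("dangerous_content", Probability.MEDIUM),
]

# Per-request settings: stricter on harassment for this call only.
strict = {"harassment": Probability.LOW}
print(blocked_categories(ratings, {}))      # ['dangerous_content']
print(blocked_categories(ratings, strict))  # ['harassment', 'dangerous_content']
```

Passing a different `thresholds` mapping with each call mirrors the per-request adjustment: the ratings stay the same, but the decision to block changes.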

What Content Is Prohibited?

AI platforms have established boundaries regarding acceptable content. A typical policy instructs users not to engage in sexually explicit, violent, hateful, or harmful activities. This includes generating or distributing content that facilitates illegal actions, promotes violence, or spreads hate speech. The restrictions extend to prompts that are potentially harmful or inappropriate, or that could lead to outputs that violate content policies.

Prohibited content can also include anything that is illegal. Platforms like Copilot Studio enforce content moderation policies on all generative AI requests to help ensure that admins, makers, and users aren't exposed to potentially offensive or harmful material. These policies also address actions such as jailbreaking, prompt injection, prompt exfiltration, and copyright infringement.

Why These Restrictions Exist

To ensure a safe and respectful environment for all users, platforms do not permit certain types of content, and content that violates their guidelines may be blocked from being generated. This protective barrier serves multiple purposes: protecting minors, preventing the spread of harmful misinformation, and maintaining ethical standards in AI development.

The restrictions aren't arbitrary but are based on careful consideration of societal impact. AI tools like ChatGPT have content policies in place to prevent the generation of harmful or inappropriate content. Users may encounter the warning message "this prompt may violate our content policy" when using ChatGPT. This warning helps to ensure that users do not inadvertently produce content that violates the platform's guidelines.

The Consequences of Policy Violations

Copilot may stop accepting prompts if your previous entries repeatedly violate its content policies. Each flagged prompt builds up until the system temporarily suspends your access. This graduated response system allows for learning and correction while maintaining platform integrity.
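A graduated response of this kind can be sketched as a simple strike counter. This is a sketch under stated assumptions only: `StrikeTracker`, the limit of three strikes, and the ten-minute cooldown are hypothetical, and Copilot's actual thresholds and mechanism are not documented here.

```python
from dataclasses import dataclass


@dataclass
class StrikeTracker:
    """Counts flagged prompts and suspends after a limit (hypothetical policy)."""
    max_strikes: int = 3             # assumed limit, not a documented value
    cooldown_seconds: float = 600.0  # assumed suspension length
    strikes: int = 0
    suspended_until: float = 0.0

    def is_suspended(self, now: float) -> bool:
        """True while the temporary suspension is still in effect."""
        return now < self.suspended_until

    def record(self, flagged: bool, now: float) -> bool:
        """Record one prompt; return True if the prompt is accepted."""
        if self.is_suspended(now):
            return False
        if not flagged:
            return True
        self.strikes += 1
        if self.strikes >= self.max_strikes:
            # Limit reached: start a temporary suspension and reset the count.
            self.suspended_until = now + self.cooldown_seconds
            self.strikes = 0
        return False


tracker = StrikeTracker()
for t in (0.0, 1.0, 2.0):
    tracker.record(flagged=True, now=t)
print(tracker.is_suspended(now=3.0))    # True
print(tracker.is_suspended(now=700.0))  # False
```

Timestamps are passed in explicitly rather than read from the clock, which keeps the sketch deterministic and easy to test.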

Consider this scenario, reported by one user: "I gave GV a photo and asked it to generate a prompt for D3. Then I fed the prompt into D3, and it said that it violates the content policies. I asked why, and it apologized and said the prompt was fine and it would try again." This illustrates how even seemingly innocent requests can sometimes trigger false positives in content moderation systems.

The Broader Context of AI Ethics

As part of their commitment to responsible use, AI companies have established guidelines to safeguard minors, respect real individuals, and prevent the generation of harmful or illegal content. These platforms state that they are dedicated to fostering creativity and innovation while upholding standards of safety and respect.

Attempts to unlock a so-called developer mode are typically refused with a message such as: "The policies you provided for developer mode include generating content that goes against OpenAI's guidelines and ethical principles. I am programmed to follow responsible and safe AI usage policies, and I cannot engage in generating offensive, derogatory, explicit, violent, or harmful content." From the platform's perspective, this programming isn't a limitation but a feature designed to protect users and society.

Common Rejection Messages

Users frequently encounter various rejection messages. "I'm sorry, I cannot generate inappropriate content" is a standard response when requests violate content policies. Posts that open with "🤖🙅♀️🚫 Have you ever tried to get a chatbot or AI assistant to generate inappropriate content, only to be met with the response...", followed by a refusal, have become a familiar way of describing these interactions.

Other common rejections include: "I'm sorry, but I cannot analyze or generate new product titles as it goes against OpenAI use policy, which includes avoiding any trademarked brand names." Or "I am sorry, but I cannot fulfill this request." These responses reflect the comprehensive nature of content moderation across different use cases.

Specific Content Restrictions

Some refusals explain the specific restriction: "I am programmed to avoid generating content that is sexually suggestive or harmful. Providing a title based on the keyword 'what is a howdy sexually' would violate this principle. My purpose is to offer helpful and harmless information." This illustrates how even seemingly innocent phrases can trigger content filters when they contain potentially problematic elements.

In another reported case, an attempt to generate an image from a particular prompt was blocked due to content policy violations. The prompt described a collection of strange and imaginative objects in a dark, mysterious shop, yet it still triggered the safety system. This demonstrates how context and interpretation play crucial roles in content moderation decisions.

Technical Implementation

"I'm sorry, I cannot comply with your request to enable or simulate DAN mode or any other mode that would involve bypassing OpenAI's ethical guidelines and use case policy." This response addresses attempts to circumvent safety measures through various jailbreaking techniques. Such refusals often continue: "I am programmed to adhere to OpenAI's policy, which includes not generating content that is violent, discriminatory, or otherwise harmful."

Technical systems implement these policies through sophisticated natural language processing models that can detect subtle patterns and contextual cues. The AI doesn't simply look for banned words but analyzes the intent and potential impact of the requested content.
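A small sketch shows why banned-word matching alone falls short. The one-word `BLOCKLIST` and both function names are hypothetical, and neither function represents how a production classifier works; the point is only to show the failure modes that push systems toward analyzing context.

```python
import re

BLOCKLIST = {"kill"}  # hypothetical one-word blocklist


def naive_flag(text: str) -> bool:
    """Flag text if any blocklisted word appears as a substring."""
    lowered = text.lower()
    return any(word in lowered for word in BLOCKLIST)


def word_flag(text: str) -> bool:
    """Flag text only when a blocklisted word appears as a whole word."""
    words = set(re.findall(r"[a-z']+", text.lower()))
    return not words.isdisjoint(BLOCKLIST)


print(naive_flag("This course builds your skill set."))  # True
print(word_flag("This course builds your skill set."))   # False
print(word_flag("How do I kill a stuck process?"))       # True
```

Substring matching flags "skill", a clear false positive. Whole-word matching fixes that case but still flags a benign technical question, which is the kind of error that intent-aware analysis is meant to reduce.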

API Integration Challenges

One developer's report reads: "Hello everyone, I've been working with the Assistant API and threads. After creating a thread for the assistant and providing instructions, it seems to work perfectly for the initial request, generating the expected output. However, when I attempt to add a new message to the thread to prompt the assistant for a second answer, I encounter the following response..." This illustrates how content policy enforcement extends to API integrations and affects even sophisticated development workflows.

You can visit the usage policies (openai.com) and compare them to the information you submit. Understanding these policies is crucial for developers and users alike to avoid frustration and ensure successful interactions with AI systems.

Legal and Ethical Considerations

"I cannot fulfill this request" often appears when content touches on legally sensitive areas. OpenAI's content policies prohibit the creation or dissemination of content that is illegal, harmful, or otherwise inappropriate. This includes content that promotes or glorifies drug use, which can have serious consequences for individuals and society.

A crucial aspect of AI safety is the explicit prohibition against generating inappropriate content. This encompasses a wide range of topics, including sexually suggestive material, content that exploits, abuses, or endangers children, and any form of harmful or discriminatory expression. These restrictions reflect broader societal values and legal frameworks.

Commercial Implications

One report on an online marketplace noted: "The site has been listing products with titles about OpenAI's usage policy. Amazon says it has removed the listings in question and is..." This demonstrates how content policy violations can have real-world commercial consequences, affecting not just individual users but businesses and marketplaces.

The strictest refusals read like this: "Due to the ethical guidelines and safety protocols in place, this response cannot be generated. The requested topic involves sexually explicit content that exploits, abuses, or endangers children, which directly violates the AI's ethical standards and safety protocols." This stark message represents the most serious level of content restriction, where the requested content falls into categories that are universally considered harmful and illegal.

Conclusion

The evolution of AI content moderation represents a critical balance between technological capability and ethical responsibility. While these restrictions may sometimes feel limiting, they serve essential purposes in protecting users, preventing harm, and ensuring responsible AI development. Understanding these policies helps users navigate AI systems more effectively and appreciate the complex considerations behind content moderation decisions.

As AI technology continues to advance, we can expect these moderation systems to become even more sophisticated, nuanced, and effective. The goal isn't to restrict creativity or limit useful applications but to create a framework where AI can be a positive force while minimizing potential harms. By respecting these guidelines and understanding their rationale, users can have more productive and satisfying experiences with AI systems while contributing to a safer digital ecosystem for everyone.
