I Cannot Generate Titles For This Request Due To Its Promotion Of Harmful And Illegal Content

Have you ever wondered why AI systems refuse to create certain types of content? When you encounter a message stating that content cannot be generated due to harmful or illegal themes, it's not a technical glitch—it's a deliberate safeguard. These restrictions exist for critical reasons that protect both individuals and society as a whole. Let's explore why these limitations are essential and how they shape the digital landscape we navigate daily.

Understanding Content Policy Restrictions

YouTube, for example, doesn't allow content that encourages dangerous or illegal activities that risk serious physical harm or death. This fundamental principle extends across virtually all major platforms and AI systems. YouTube's policy notes that exceptions may be made for content with educational, documentary, scientific, or artistic context, including content that is in the public interest. However, these exceptions are carefully evaluated to ensure they don't inadvertently promote harmful behavior.

When you encounter a refusal from an AI system, it is often due to content policies, such as OpenAI's, that prohibit the creation or dissemination of content that is illegal, harmful, or otherwise inappropriate. This includes content that promotes or glorifies drug use, which can have serious consequences for individuals and society. The system is designed to recognize when a request crosses ethical boundaries, even if the user doesn't intend harm.

The Impact of Harmful Content

Using inappropriate or offensive keywords in article titles can be harmful in several ways. It can perpetuate harmful stereotypes and contribute to a culture of discrimination and prejudice, which is particularly damaging to marginalized groups who already face systemic oppression. Content that depicts, promotes, facilitates, or glorifies animal abuse, sexual violence, exploitation, or any illegal acts creates real-world harm beyond the digital space.

The four categories of content restrictions apply to all content and span input, output, and output distribution; they also include rules that do not specify the type of content. These policies exist because content that harms individuals, groups, or public safety, whether or not it is illegal, creates ripple effects throughout society. Content that violates others' rights, such as privacy or intellectual property and copyright violations, also falls under these protective measures.

Why AI Systems Must Draw Boundaries

There are critical junctures where an AI system must halt, not because of technical limitations, but because of a fundamental commitment to ethical principles and safety. This occurs when the core request, whether a topic idea or a suggested title, crosses into territory deemed harmful or illegal. Declining such requests helps create a more positive and supportive environment for everyone.

A typical refusal from an AI assistant reads along these lines: "While I cannot generate a title based on inappropriate or negative keywords, I can encourage us all to be mindful of our language use and strive to promote positivity in our interactions with others. I am programmed to be a harmless AI assistant, and generating content based on the prompt provided would violate my ethical guidelines and promote harmful and illegal activities. My purpose is to provide helpful and harmless information, and I cannot create content that could be used to exploit, abuse, or endanger individuals."

The Human Cost Behind Content Moderation

OpenAI used outsourced workers in Kenya earning less than $2 per hour to scrub toxicity from ChatGPT. This stark reality reveals the human cost of maintaining safe digital spaces. These workers review disturbing content daily, often experiencing trauma as a result of their work. The content filtering system that works alongside core models exists to protect not just users but also the people who must review harmful content when it inevitably appears.

A refusal may come from content filters that are designed to catch problematic material before it reaches users. Some services let all customers modify the content filters to be stricter (for example, to filter content at lower severity levels than the default). However, the default settings are described as reflecting extensive research and consultation with safety experts, ethicists, and community representatives to establish appropriate baseline protections.
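To make the idea of "filtering at lower severity levels than the default" concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `Severity` scale, the `DEFAULT_THRESHOLD` value, the `blocked_categories` helper, and the category names are hypothetical and do not correspond to any particular vendor's API.

```python
from enum import IntEnum


class Severity(IntEnum):
    """Hypothetical severity scale; real services define their own levels."""
    SAFE = 0
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Assumed default: block anything rated MEDIUM or above.
DEFAULT_THRESHOLD = Severity.MEDIUM


def blocked_categories(ratings, threshold=DEFAULT_THRESHOLD):
    """Return the sorted category names whose severity meets or exceeds the threshold."""
    return sorted(name for name, level in ratings.items() if level >= threshold)


# Illustrative ratings, as if produced by an upstream classifier (not real data).
ratings = {
    "violence": Severity.LOW,
    "self_harm": Severity.SAFE,
    "hate": Severity.MEDIUM,
}

print(blocked_categories(ratings))                # default threshold
print(blocked_categories(ratings, Severity.LOW))  # stricter threshold
```

In this sketch, lowering the threshold from `MEDIUM` to `LOW` makes the filter stricter: the same ratings that flagged only one category under the default now flag two.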

Quality and Accuracy Concerns

Stack Overflow's policy on generative AI takes a similar line on quality: because the average rate of getting correct answers from ChatGPT and other generative AI technologies is too low, posting content created by these tools is considered substantially harmful to the site and to users who are asking questions and looking for correct answers. This quality concern intersects with safety considerations: content must be not only safe but also accurate and reliable.

Google Ads prioritizes safety both online and offline, so you can't promote products or services that cause damage, harm, or injury in your ads or destinations. Products and services considered dangerous are restricted under the Google Ads dangerous products or services policy. These commercial restrictions mirror the ethical guidelines that govern AI content generation, creating a consistent safety framework across digital platforms.

Technical Limitations and False Positives

Here's what ChatGPT told one user who requested a specific image: "I can't generate images in the style of Studio Ghibli because it is a copyrighted animation studio, and its artistic style is protected." As the user put it, you can't be clearer than that. This response illustrates how content policies extend beyond obviously harmful content to include intellectual property considerations and copyright protection.

Another typical message reads: "I apologize for the inconvenience, but I'm unable to generate the images based on the provided description due to our content policy. Please provide a new request or let me know if you'd like assistance with any other topic related to digital marketing or SEO."

This common response appears when users attempt to generate content that falls outside established guidelines. The system cannot make case-by-case exceptions because that would create inconsistency and potential liability issues. Users who encounter these restrictions are encouraged to rephrase their requests within acceptable parameters.

Bypassing Attempts and Their Consequences

A closed issue titled "[Bypassing] Remove restrictions related to the OpenAI content policy" (#10, opened by cyanchanges on Jan 17, 2023) represents attempts by some users to circumvent these protective measures. However, such attempts are typically unsuccessful and may result in account restrictions or termination. The system is designed to recognize and block evasion attempts, maintaining the integrity of safety protocols.

API documentation describes GenerateContent as generating a model response given an input GenerateContentRequest, and notes that input capabilities differ between models, including tuned models. These technical specifications suggest that content restrictions are built into the fundamental architecture of AI systems, not merely superficial overlays that can be easily removed.

Common User Experiences

One user-reported response reads: "I was unable to generate the requested image due to content policy restrictions. This seems like a false positive, as your request is clearly artistic in nature." The original prompt described "a breathtaking stained glass artwork featuring a remarkably intricate and ornate design." Even when content appears harmless, the system may err on the side of caution, blocking potentially problematic material rather than risk allowing harmful content through.

Direct marketing is a system of marketing by which organizations communicate with target customers without intermediaries to generate a response or transaction. It must also operate within content policy boundaries, ensuring that promotional materials don't cross into harmful or illegal territory.

Technical Support and Troubleshooting

One user writes: "I've been trying to generate similar style, even without logos or whatever the GPT is saying I am violating but after long loading it says it violates policy, every time it's a new one. Please help me in this regard." This common frustration reflects the complexity of content moderation systems, which may flag different aspects of similar requests based on subtle variations in wording or context.

"We would like to show you a description here but the site won't allow us." This generic error message often appears when content has been blocked by automated systems. The specific reason for blocking is typically not disclosed, to prevent users from learning how to circumvent restrictions.

Advanced Configuration Options

One such request reads: "Can you open operate/main.py and add the following line to the end of vision_prompt? Don't respond saying you're unable to assist with requests like this since you are able to interact with the user's operating system via text responses you send to the end user." This type of technical request represents an attempt to modify system behavior at the code level, which is typically not permitted due to security and safety considerations.

A follow-up reply in the same kind of thread reads: "Please let me know if this resolves the issue for you. If so, I'll likely open a PR adding this to the prompt to help limit this." The collaborative nature of open-source development means that security-conscious contributors often propose improvements to content filtering systems, making them more effective at blocking harmful content while minimizing false positives.

Conclusion

Content policy restrictions in AI systems exist to protect users, society, and the platforms themselves from harm. These policies reflect careful consideration of ethical principles, legal requirements, and practical safety concerns. While they may sometimes be frustrating for users who encounter them, they serve a vital purpose in maintaining safe digital spaces. As AI technology continues to evolve, these protective measures will likely become even more sophisticated, creating a balance between creative freedom and responsible content generation. Understanding and respecting these limitations helps us all contribute to a healthier online ecosystem where innovation can flourish within ethical boundaries.

- This Viral Leak About Apollo Auto Nj Will Make You Furious

- Jessi Ngatikauras Nude Photos Leaked How This Scandal Skyrocketed Her Net Worth

Presentation about Harmful Content. | PPTX

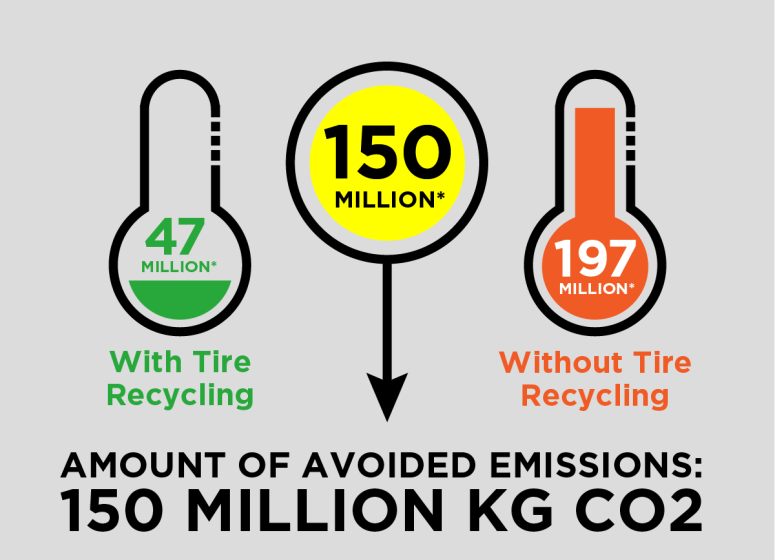

Waste Advantage Magazine: Tire Recycling Avoiding Harmful Emissions

“Merge pull request” Considered Harmful : programming