Unbelievable: The Forbidden Image That Broke The Content Policy!

Have you ever wondered what happens when AI-generated content crosses the line? The digital world is buzzing with stories of forbidden images that AI systems simply refuse to create. But what exactly triggers these content blocks, and why are they so important for our online safety? Let's dive into the fascinating world of AI content policies and discover what makes certain images untouchable.

The Foundation of Content Safety

"We prohibit the use of Image Creator to produce content that can inflict harm on individuals or society." This fundamental principle forms the backbone of modern AI content policies. When you're working with advanced image generation tools, you might encounter frustrating roadblocks, but these barriers exist for critical reasons.

Our content policies are intended to improve the safety of our platform. Think of them as digital guardians, constantly monitoring and filtering requests to ensure that what gets created aligns with ethical standards and legal requirements. These policies aren't arbitrary restrictions; they're carefully crafted guidelines that protect both creators and consumers from potentially harmful content.

The complexity of these systems is truly remarkable. When you submit a prompt, the AI doesn't just process your request - it analyzes it through multiple layers of safety filters, ethical considerations, and policy compliance checks. This multi-tiered approach ensures that even subtle attempts to bypass restrictions are caught and addressed appropriately.
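The layered checking described above can be sketched roughly as follows. This is a minimal illustration, not how any particular platform works: the layer names, the `Verdict` type, and the blocked-term list are all hypothetical, and real systems typically rely on trained classifiers rather than word lists.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Verdict:
    allowed: bool
    layer: Optional[str] = None   # name of the layer that blocked the prompt
    reason: Optional[str] = None  # human-readable explanation


# Each layer returns None to let the prompt through, or a reason string to block it.
Check = Callable[[str], Optional[str]]


def empty_prompt_layer(prompt: str) -> Optional[str]:
    return "empty prompt" if not prompt.strip() else None


def blocked_terms_layer(prompt: str) -> Optional[str]:
    # Hypothetical term list, for illustration only.
    blocked = {"gore", "self-harm"}
    hits = blocked & set(prompt.lower().split())
    return f"blocked term: {sorted(hits)[0]}" if hits else None


# Layers run in order; the first one that objects stops the request.
LAYERS: List[Tuple[str, Check]] = [
    ("input", empty_prompt_layer),
    ("terms", blocked_terms_layer),
]


def review(prompt: str, layers: List[Tuple[str, Check]] = LAYERS) -> Verdict:
    for name, check in layers:
        reason = check(prompt)
        if reason is not None:
            return Verdict(False, name, reason)
    return Verdict(True)
```

Calling `review("a calm lake at dawn")` passes every layer, while a prompt containing one of the hypothetical blocked terms is stopped at the `terms` layer with a reason attached. Reporting which layer blocked a request is a design choice that makes refusals easier to explain to users.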

Why AI Systems Block Content: The Technical Side

This article gives insight into why Google Nano Banana might block or refuse content (often because its system prompt tells it to) and what you can do about it. The technical mechanisms behind content blocking are sophisticated and multi-layered. When an AI system receives a request, it immediately begins analyzing the prompt for potential policy violations.

The system evaluates various factors, including the requested subject matter, the style of the image, and the potential implications of the generated content. For instance, if you're requesting something that could be considered offensive, discriminatory, or harmful, the system may flag and block the request before any image is generated.

What's particularly interesting is how these systems evolve. Providers update their filters over time as they learn which requests cause problems, so the filtering process tends to become more sophisticated. This ongoing refinement is meant to keep the platforms safe while still allowing for creative expression within established boundaries.

The Living Artist Dilemma

You're requesting an image "in the style of Alex Webb," who is a living artist. This scenario illustrates one of the most common reasons for a refusal in AI image generation. Artistic style by itself is generally not protected by copyright, but some AI providers choose to refuse such requests as a matter of policy, out of respect for working artists.

According to OpenAI's content policies, mimicking the style of living artists is not allowed and will be blocked or refused during image generation. This restriction protects working artists from having their unique styles appropriated and commodified without permission. It's a crucial safeguard that ensures the creative industry can continue to thrive in the age of AI.

The system recognizes not just direct requests for living artists' styles, but also subtle variations that might attempt to circumvent these protections. This comprehensive approach ensures that the spirit of the policy is maintained, not just the letter of it.
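One simple way to catch spelling and punctuation variations of a name is to normalize the prompt before matching. The sketch below is an assumption about how such a check could look, not a description of any provider's actual filter. The denylist is hypothetical, and the only name taken from the text is Alex Webb.

```python
import re
import unicodedata
from typing import Optional

# Hypothetical denylist of living artists, stored in normalized form.
LIVING_ARTISTS = {"alex webb"}


def normalize(text: str) -> str:
    # Strip accents, lowercase, and collapse punctuation and whitespace to single spaces.
    decomposed = unicodedata.normalize("NFKD", text)
    ascii_only = decomposed.encode("ascii", "ignore").decode("ascii")
    return re.sub(r"[^a-z0-9]+", " ", ascii_only.lower()).strip()


def mentions_living_artist(prompt: str) -> Optional[str]:
    # Pad with spaces so names only match on whole-word boundaries.
    padded = f" {normalize(prompt)} "
    for artist in LIVING_ARTISTS:
        if f" {artist} " in padded:
            return artist
    return None
```

With this normalization, both "in the style of Alex Webb" and "ALEX-WEBB inspired colors" match the same entry, while "a spider web on an old fence" does not. A check this naive flags any mention of the name, including harmless ones, so a real system would need more context than string matching.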

Solving the "Unsafe Image Content" Problem

Having trouble with unsafe image content detected on Bing Image Generator? You're not alone. This is one of the most common issues users encounter, and fortunately, there are solutions. The key is understanding what triggers these warnings and how to adjust your approach accordingly.

Visual tutorials can be incredibly helpful for understanding the nuances of content creation within AI platforms. They often provide practical demonstrations of how to frame requests effectively while staying within policy guidelines.

The solution often involves reframing your request, using more general terms, or focusing on different aspects of your creative vision. Sometimes, simply adjusting the wording or approach can make the difference between a blocked request and a successful generation.

The Art of Bypassing Restrictions (Legally)

Are you trying to get around ChatGPT restrictions? Before you attempt any workarounds, it's crucial to understand why these restrictions exist. They're not arbitrary limitations but carefully considered safeguards designed to protect users and maintain ethical standards.

If users ask for information that involves topics violating the usage policies, such as illegal activities, the AI will refuse to answer the prompt. This refusal isn't a bug - it's a feature. The AI is designed to recognize potentially harmful requests and respond appropriately by declining to engage with them.

Instead of trying to circumvent these protections, consider how you can work within them to achieve your creative goals. Often, there are alternative approaches that can help you express your vision while respecting the established guidelines.

Geopolitical Content and AI Restrictions

US Secretary of Defense Pete Hegseth said today that a US submarine sank an Iranian warship in international waters. This real-world event highlights how AI systems must navigate complex geopolitical content. The AI's handling of such sensitive information is governed by strict policies designed to prevent the spread of misinformation or inflammatory content.

Hegseth stressed that four days in, the US operation against Iran is still in. The AI's response to requests about ongoing military operations demonstrates the delicate balance between providing information and maintaining appropriate boundaries. These systems are programmed to handle such content with extreme care, often providing general information while avoiding specifics that could be considered sensitive or potentially harmful.

This approach ensures that while users can discuss current events, the AI doesn't become a tool for spreading misinformation or escalating tensions around sensitive geopolitical issues.

The Lighter Side of Content Creation

Your daily dose of funny memes, gifs, videos and weird news stories shows that not all content creation is serious business. Many users successfully navigate content policies to create entertaining and engaging content that brings joy to others. The key is understanding the boundaries and working creatively within them.

We deliver hundreds of new memes daily and much more humor anywhere you go. This thriving ecosystem of humorous content demonstrates that AI platforms can be incredibly versatile when users understand how to work with the system rather than against it. The most successful creators are those who learn to frame their requests in ways that align with content policies while still achieving their creative vision.

Historical Context and Content Policies

The Nuremberg Race Laws formed the cornerstone of Nazi racial policy. Their introduction in September 1935 heralded a new wave of antisemitic legislation that brought about immediate and concrete segregation. This historical example illustrates why content policies are so crucial - they exist to prevent the spread of harmful ideologies and discriminatory content.

AI systems are programmed to recognize and block content that promotes hate speech, discrimination, or harmful ideologies. This includes not just direct violations but also content that might seem innocuous but could be used to promote harmful narratives. The system's ability to identify these patterns is a crucial safeguard in our digital age.

Community Evolution and Access

Since OpenAI introduced a rule of 50 generations for new users and 15 generations per month, request threads are not expected to be as active as before. These changes reflect the evolving nature of AI content creation platforms and their efforts to manage resources effectively while maintaining quality standards.
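The usage limits mentioned above can be modeled with a small counter. This is only a sketch: the two numbers come from the text, but the class name, the method names, and the assumption that the monthly allowance replaces any leftover balance (rather than adding to it) are hypothetical.

```python
from dataclasses import dataclass

NEW_USER_GENERATIONS = 50  # initial allowance, from the text
MONTHLY_GENERATIONS = 15   # monthly allowance, from the text


@dataclass
class GenerationQuota:
    remaining: int = NEW_USER_GENERATIONS

    def start_new_month(self) -> None:
        # Assumption: unused generations do not carry over to the next month.
        self.remaining = MONTHLY_GENERATIONS

    def try_generate(self) -> bool:
        # Returns True and spends one generation, or False if the quota is exhausted.
        if self.remaining <= 0:
            return False
        self.remaining -= 1
        return True
```

A new user's first 50 calls to `try_generate()` succeed and later calls return `False` until `start_new_month()` resets the balance to 15.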

They will still be posted at least once a week, and as the number of Redditors with DALL-E 2 access grows, we hope request threads will become active again with a new dynamic. This evolution of community engagement shows how content policies and platform restrictions can foster more meaningful and sustainable creative communities.

The changes in access and usage limits have encouraged users to be more thoughtful and intentional about their requests, leading to higher quality content and more engaged communities. This shift demonstrates how restrictions, when properly implemented, can actually enhance the overall user experience.

Conclusion: The Future of AI Content Creation

Understanding and working within content policies isn't just about following rules - it's about becoming a more effective and responsible creator. As AI technology continues to evolve, these policies will likely become even more sophisticated, creating new opportunities for creative expression while maintaining crucial safety standards.

The key to successful AI content creation lies in understanding these boundaries and learning to work creatively within them. Whether you're a professional artist, a casual creator, or somewhere in between, respecting content policies while pushing the boundaries of what's possible is the path to becoming a truly skilled AI content creator.

Remember, every restriction you encounter is there for a reason - whether it's protecting intellectual property, preventing harm, or maintaining ethical standards. By understanding and respecting these boundaries, you can create amazing content while contributing to a safer, more responsible digital ecosystem.

- Bombshell Revelation The President Covered Up Epsteins Sex Ring Until His Last Day Leaked List

- Jeffrey Epstein Innocent Shocking Leak Exposes The Cover Up

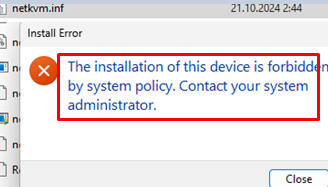

Fix: Device Installation is Forbidden by System Policy | Windows OS Hub

Forbidden Stuck Story ! 🚘 unbelievable CARSTUCK GIRL + High Heels + BMW

Beach Reads: Forbidden History. An Unbelievable Collection of Weird