I Cannot Generate Titles That Include Racist Slurs Or Promote Hate Speech

Have you ever wondered why platforms and content creators refuse to generate titles containing racist slurs or hate speech? This isn't about censorship—it's about creating a safer, more inclusive digital environment where everyone can participate without fear of harassment or discrimination. The refusal to create such content reflects a growing understanding of hate speech's harmful impact and the responsibility we all share in combating it.

When we encounter requests that involve hate speech or racist language, the appropriate response isn't to fulfill them but to educate about why they're problematic. This article explores the complex landscape of hate speech, its definitions, impacts, and the crucial role of context in determining whether potentially offensive content can be justified for legitimate purposes.

Understanding Hate Speech and Its Context

Hate speech represents any form of expression through which speakers intend to vilify, humiliate, or incite hatred against a group or class of persons based on race, religion, skin color, sexual identity, gender identity, ethnicity, disability, or national origin. This definition encompasses both overt expressions of hate, such as the vitriolic use of slurs, and more covert forms that might appear subtle but still reinforce harmful stereotypes.

For educational, documentary, scientific, or artistic content that includes hate speech, some video platforms require the justifying context to appear in the images or audio of the video itself. Providing it in the title or description alone isn't sufficient; the context needs to be embedded within the actual content. This requirement ensures viewers understand the purpose behind including potentially offensive material and can make informed decisions about engaging with it.

Discussions about social and political issues are welcome, but they must be respectful and constructive. The distinction between legitimate discourse and hate speech often lies in the intent and impact of the expression. While criticism of ideas, policies, or actions is generally protected speech, attacking individuals or groups based on their protected characteristics crosses into harmful territory.

The Harm of Hate Speech and Its Evolution

"There is an enduring and harmful notion that technology is neutral and objective," Ashwini K.P. said during an interactive dialogue marking the launch of her new report at the Human Rights Council's 56th session in Geneva, Switzerland. This misconception fails to recognize that algorithms, platforms, and even seemingly neutral tools can perpetuate bias and amplify hate speech if not carefully designed and monitored.

The use of racist language must be editorially justified and signposted to ensure it meets audience expectations wherever it appears. This editorial justification requires creators to demonstrate that the inclusion of such language serves a clear purpose—whether educational, historical, or artistic—and that its use is handled with sensitivity and appropriate context. Without this justification, such content risks causing harm without contributing meaningful value.

Platform conduct policies commonly prohibit targeting others with repeated slurs, tropes, or other content that intends to degrade or reinforce negative or harmful stereotypes about a protected category. This prohibition recognizes that repeated exposure to hate speech can have cumulative psychological effects, creating hostile environments that silence marginalized voices and discourage participation in public discourse.

Defining and Identifying Hate Speech

How to define hate speech remains a complex philosophical and legal challenge. Different jurisdictions and platforms have varying standards, but most agree that hate speech targets protected characteristics and aims to demean or incite hatred against specific groups. The challenge lies in balancing free expression with protection from harm, particularly in diverse societies where different groups may have conflicting sensitivities.

What are the plausible harms of hate speech? Research demonstrates multiple levels of harm, from the immediate psychological impact on targets to the broader societal effects of normalizing discrimination. Hate speech can create hostile environments that limit people's freedom to participate in public life, contribute to real-world violence, and reinforce systemic inequalities. The harm extends beyond individuals to communities and society as a whole.

An account of hate speech might include both overt expressions of hate, such as the vitriolic use of slurs, and more covert forms, such as dog whistles or coded language. This comprehensive approach recognizes that hate speech evolves and adapts, often becoming more subtle as overt forms face greater social and legal consequences.

Content Moderation and Classification

One approach to building a hate speech classifier starts with human review: annotators read sample social media comments and rate each comment for hatefulness. This human review process forms the foundation of content moderation systems, providing the nuanced understanding that automated tools often lack. Human annotators can recognize context, sarcasm, and cultural references that might confuse algorithms.

Rather than marking comments as either "hate speech" or "not hate speech," the annotators are asked a series of questions about each comment. This nuanced approach acknowledges that hate speech exists on a spectrum and that context matters significantly. Questions might explore whether the comment targets protected characteristics, whether it expresses contempt or disgust, and whether it could incite hatred or violence.

This multi-layered evaluation process helps create more accurate classification systems that can better serve both users and platforms. By avoiding binary classifications, these systems can better handle edge cases and provide more meaningful data for improving content moderation policies.
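The multi-question annotation scheme described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the three question names, the 0 to 4 rating scale, and the plain averaging step are all hypothetical choices, since the text does not say which questions are asked or how answers are combined. Production systems may use more sophisticated statistical models than a simple mean.

```python
from dataclasses import dataclass
from statistics import mean

# Hypothetical survey questions (assumed for illustration), each rated
# from 0 (not at all) to MAX_RATING (strongly).
QUESTIONS = (
    "targets_protected_group",
    "expresses_contempt",
    "incites_hatred_or_violence",
)
MAX_RATING = 4


@dataclass
class Annotation:
    annotator_id: str
    ratings: dict[str, int]  # question name -> rating in 0..MAX_RATING


def hatefulness_score(annotations: list[Annotation]) -> float:
    """Return a score in [0, 1]: each annotator's mean rating across the
    questions, normalized by MAX_RATING, then averaged over annotators."""
    if not annotations:
        raise ValueError("need at least one annotation")
    per_annotator = []
    for ann in annotations:
        missing = [q for q in QUESTIONS if q not in ann.ratings]
        if missing:
            raise ValueError(f"{ann.annotator_id} did not answer: {missing}")
        per_annotator.append(mean(ann.ratings[q] for q in QUESTIONS) / MAX_RATING)
    return mean(per_annotator)


def question_breakdown(annotations: list[Annotation]) -> dict[str, float]:
    """Return the mean rating per question, useful for inspecting edge cases."""
    return {q: mean(a.ratings[q] for a in annotations) for q in QUESTIONS}


# Two annotators rating the same (unspecified) comment.
sample = [
    Annotation("a1", {"targets_protected_group": 4,
                      "expresses_contempt": 2,
                      "incites_hatred_or_violence": 0}),
    Annotation("a2", {"targets_protected_group": 4,
                      "expresses_contempt": 4,
                      "incites_hatred_or_violence": 4}),
]

print(hatefulness_score(sample))   # 0.75
print(question_breakdown(sample))
```

Keeping the per-question breakdown alongside the overall score preserves the information a binary label would discard: here both annotators agree the comment targets a protected group but disagree on whether it incites violence, which is the kind of edge case a policy team might want to examine.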

Language Evolution and Sensitivity

Language is constantly evolving as it adapts to cultural and social changes. Terms that were once commonly used may become recognized as offensive, while new terminology emerges to better represent diverse communities. This evolution reflects changing social norms and growing awareness of how language can impact different groups.

Choosing the most appropriate term or phrase can be tricky, as there can be a lack of consensus among scholars, activists, and the public about a term or phrase's use. This lack of consensus doesn't mean we should avoid the conversation—rather, it highlights the importance of ongoing dialogue and willingness to learn and adapt as understanding evolves.

But certain words, whether used intentionally or unintentionally, can exclude individuals or leave someone feeling like perhaps they don't belong or aren't valued. This exclusion can be particularly harmful in professional, educational, or public contexts where everyone should feel welcome to participate fully.

Hate Speech vs. Hate Crimes

As defined earlier, hate speech is expression through which speakers intend to vilify, humiliate, or incite hatred against a group or class of persons on the basis of a protected characteristic. While hate speech is primarily about expression, hate crimes are overt acts, which can include violence against persons or property and the violation or deprivation of civil rights.

The distinction between hate speech and hate crimes is important because it affects how these behaviors are addressed legally and socially. Hate speech, while harmful, is often protected under free speech principles in many jurisdictions, though it may violate platform policies. Hate crimes, however, are criminal acts that can be prosecuted regardless of whether they involve speech.

Understanding this distinction helps clarify appropriate responses to different forms of hate-based behavior. While we might counter hate speech through education, counter-speech, and platform moderation, hate crimes require law enforcement intervention and legal consequences.

Creating Effective Content

AI title generators can help content creators develop engaging headlines that attract readers and improve search visibility while avoiding problematic language. However, it's crucial to review AI-generated suggestions carefully to ensure they align with your values and don't inadvertently include harmful content.

When using AI tools for content creation, remember that they reflect the data they were trained on, which may include biased or problematic content. Always review and edit AI-generated suggestions to ensure they meet your standards for inclusivity and respect. The goal is to create content that engages audiences without causing harm or perpetuating stereotypes.

The best titles and headlines capture attention while accurately representing the content that follows. They should be clear, compelling, and relevant to your target audience. Whether you're writing about serious topics like hate speech or creating content for entertainment, thoughtful headline creation can significantly impact your content's success.

Conclusion

Understanding and addressing hate speech requires ongoing education, thoughtful policy development, and commitment from individuals, organizations, and platforms. As language and social norms evolve, our approaches to identifying and responding to hate speech must also adapt. The goal isn't to restrict free expression but to create environments where everyone can participate without fear of harassment or discrimination.

By recognizing the harm that hate speech causes, understanding its various forms, and implementing appropriate responses, we can work toward more inclusive and respectful online and offline communities. This includes supporting victims of hate speech, educating potential perpetrators, and creating systems that promote positive interaction while minimizing harm.

The refusal to generate titles that contain racist slurs or promote hate speech represents a small but important step toward this goal. It reflects a growing recognition that our words matter and that we all share responsibility for creating a more just and equitable society. As we continue to navigate these complex issues, maintaining open dialogue and willingness to learn will be essential for progress.

- What Does Afk Mean The Nude Secret Thats Breaking The Internet

- What This Golden Retriever Chose For His Birthday Will Shock You

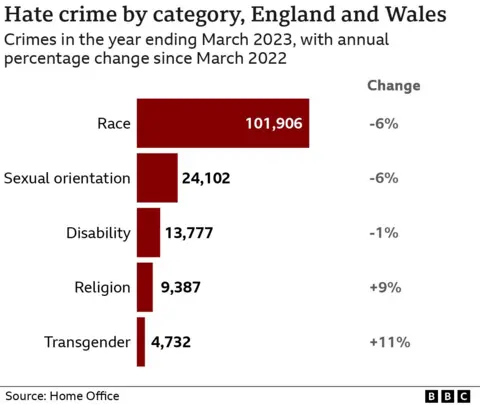

Trans hate crime rises 11% in past year in England and Wales

No hate crime charges filed against man who yelled racist slurs at Utah

Leaked Young Republican chats include hundreds of racist, homophobic